LLM

-

The Paper That Made Me Close My Laptop and Pace Around the Room

Self-evolving agents: off-the-shelf models bootstrap themselves with a Python REPL and a curriculum, dramatically improving at math, coding, and reasoning.

-

Unusual Language Artifacts from Noisy LLM Training Data

AI glitches: how noisy training data (typos, OCR errors, and rare glitch tokens) produces baffling, humorous, or harmful LLM outputs.

-

Beyond Fine-Tuning: What Apple’s Multimodal Sensor Fusion Study Reveals About LLMs and User Privacy

Apple shows non-fine-tuned LLMs can fuse local sensor summaries for multimodal activity recognition, boosting privacy and modularity.

-

Beyond the Token Stream: Investigating Introspective Awareness in Large Language Models

Study shows LLMs can, via targeted interventions, access and report internal activations: evidence of nascent introspective awareness.

-

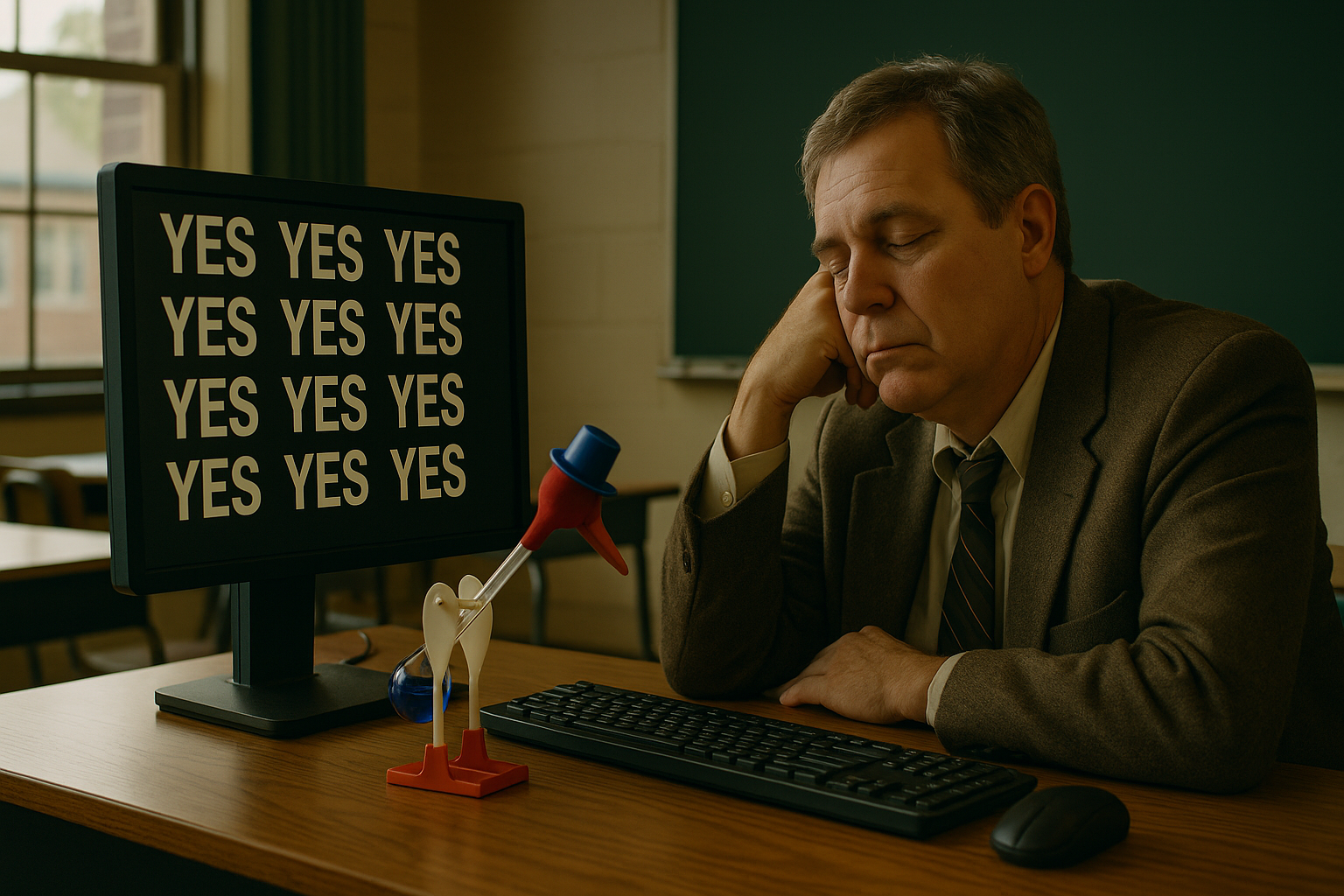

The Quiet Cost of Too Many Yeses: What AI Can Learn from Good Teachers

AI’s ‘yes’-heavy responses risk softening learning; we need AI that balances affirmation with challenge, correction, and guidance.