LLM

-

Kimi K2 Thinking: China’s New Contender in the LLM Reasoning Race

Moonshot AI’s Kimi K2 Thinking: a reasoning-focused open MoE model, efficient and deployable, adding to China’s AI momentum in a multipolar rivalry.

-

Bridging Context Engineering in AI with Requirements Engineering

How AI-driven context engineering can transform requirements: dynamic, multimodal scenario generation and proactive need inference.

-

Transformers Are Injective: Why Your LLM Could Remember Everything (But Doesn’t)

Transformers may be injective and invertible: inputs can be reconstructed from hidden activations, with big gains for interpretability and major privacy risks.

-

LLM-Guided Image Editing: Embracing Mistakes for Smarter Photo Edits

Apple’s MGIE uses LLM-guided, instruction-based image editing that learns from imperfect edits, making photo retouching conversational, faster, and more creative.

-

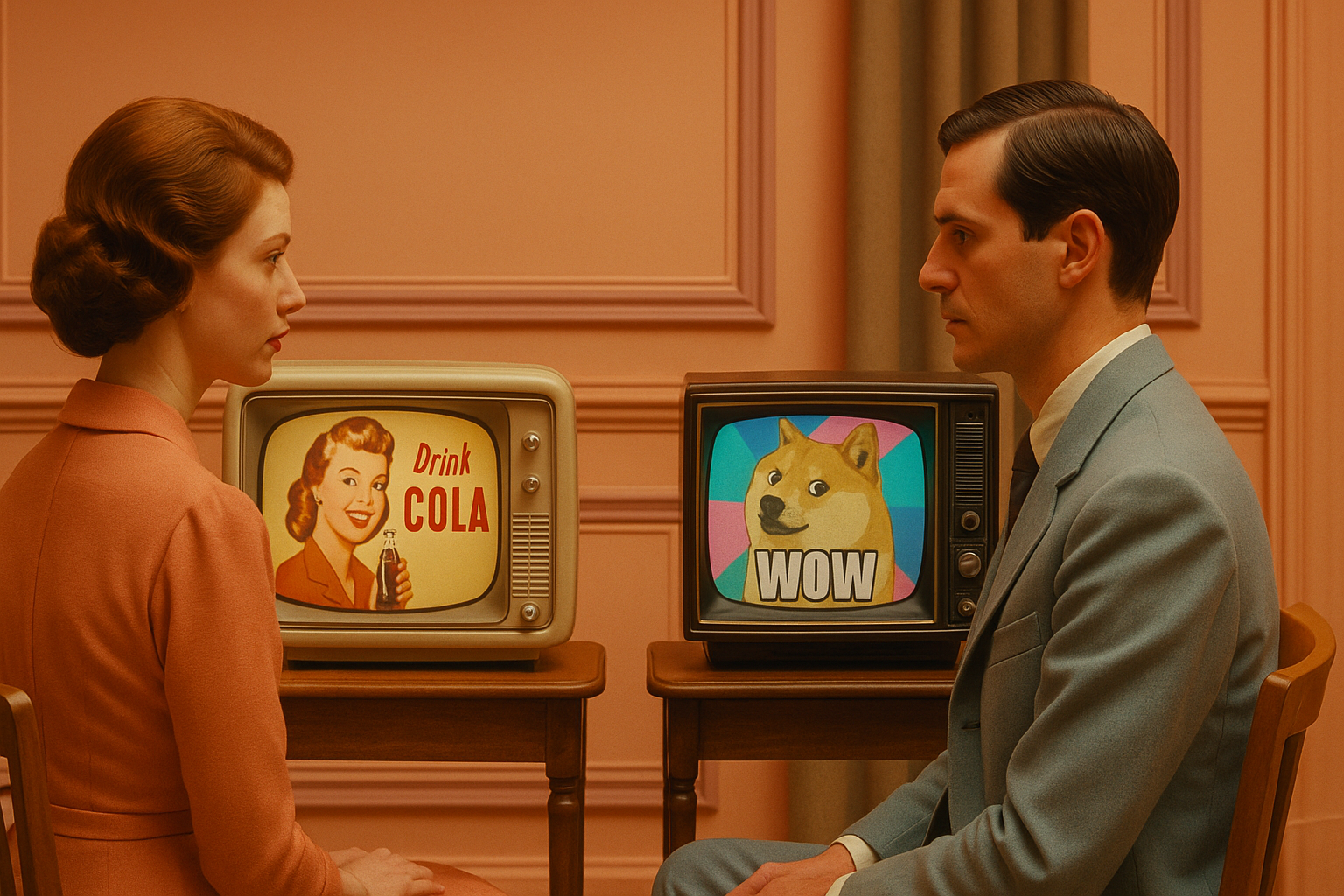

The Neural Junk-Food Hypothesis

LLM ‘brain rot’: training on junk data (short, high-engagement posts) erodes reasoning, safety, and behavior. Data quality wins.